It’s almost hard to remember a time before people could turn to “Dr. Google” for medical advice. Some of the information was wrong. Much of it was terrifying. But it helped empower patients who could, for the first time, research their own symptoms and learn more about their conditions.

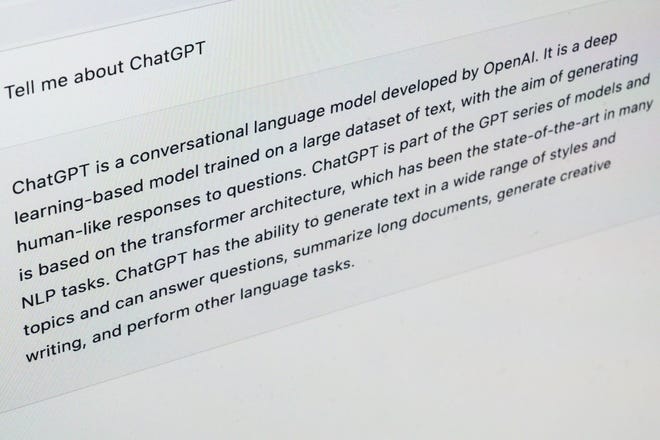

Now, ChatGPT and similar language processing tools promise to upend medical care yet again, providing patients with more data than a simple online search and explaining conditions and treatments in language nonexperts can understand.

For clinicians, these chatbots might provide a brainstorming tool, guard against mistakes and relieve some of the burden of filling out paperwork, which could reduce burnout and allow more face time with patients.

But – and it’s a big “but” – the information these digital assistants provide might be far more inaccurate and misleading than basic web searches.

“I see no potential for it in medicine,” said Emily Bender, a linguistics professor at the University of Washington. By their very design, these large language technologies are inappropriate sources of medical information, she said.

Others argue that large language models could supplement, though not replace, primary care.

“A human in the loop is still very much needed,” said Katie Link, a machine learning engineer at Hugging Face, a company that develops collaborative machine learning tools.

Link, who specializes in health care and biomedicine, thinks chatbots will be useful in medicine someday, but the technology isn’t ready yet.

And whether this technology should be available to patients, as well as doctors and researchers, and how much it should be regulated remain open questions.

Regardless of the debate, there is little doubt such technologies are coming – and fast. ChatGPT

Our guide to buying health insurance for 2015 walks you through how health insurance works and how to get health insurance plans under Obamacare. Red counties that depend more heavily on the basics face cuts in health and education – cuts that force compromises on those who teach or provide health care. “One reason that healthcare and government regulations are complicated is because we have a complex nation,” Washington, head of the Office of the National Coordinator for Health IT, told Healthcare Dive.

A ten-year review of the literature indicates that the predicted savings from EHRs and digitalization have never materialized. We do not totally doubt the intent, but the end results have been so much less than we want, so much less than the American people deserve, so much less than we know is possible. Small practices and small hospitals already facing declining revenues were forced to send more money outside and to cut back support for team members and local people. The conservative Ryan plan, which is by definition dismissive of workers and non-wealthy people in general, went all in with this aspect of Obamacare. But the good thing is that, because we – the people – created this system in the first place, we have the capacity to change it – to make things better and to improve health as a result.